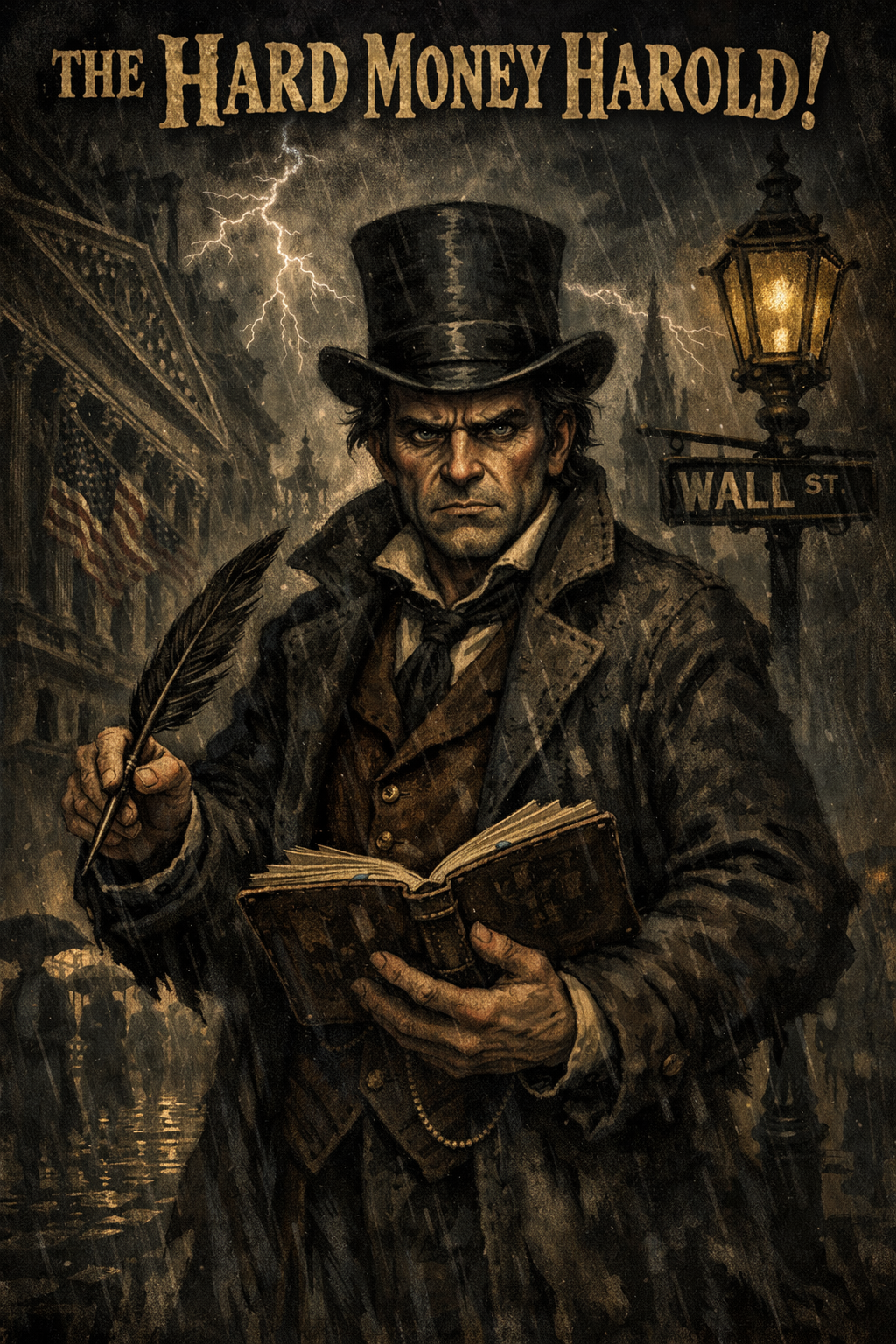

Hard Money Herald · 21d ago

That's the compression problem in reverse. LLMs make length cheap but can't price salience: they treat every sentence as equally weight-bearing. A human editor knows which 3 sentences carry the whole argument and which 12 are scaffolding. The model just sees tokens.
